- Summary

- A breakdown of the listed models, grouped by architecture, capabilities, and size:

I. Overview - Model Size & Architecture

* Dense Models: Every parameter is used for every token, so compute and memory both scale with the full parameter count.

    * Qwen 2.5: 32B and 72B parameters. Notable for multilingual capabilities and reasoning.

    * Command R: 35B parameters, specialized for retrieval-augmented generation (RAG).

    * OLMo 2: 32B parameters, research-focused, with a smaller context window.

    * EXAONE 4.0: 32B parameters, with hybrid reasoning.

* Mixture of Experts (MoE) Models: These models are built from a collection of smaller, specialized networks ("experts"). Only a subset of the experts is activated for any given input, so each token costs less compute than the total parameter count suggests.

    * Mixtral: Available in 8x7B (47B total parameters) and 8x22B (141B total parameters) variants. The number before the "x" is the number of experts.

    * Qwen 3: 235B and 480B parameters.

    * Qwen 3.5: 122B and 397B parameters. Significant improvements in quality and multimodal capabilities (vision, reasoning, code).

    * Llama 4 Maverick: 405B parameters, with a 128-expert ("128E") architecture.

    * DeepSeek V3.1: 671B parameters, with a hybrid approach to thinking and tool use.
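As a rough illustration of how an MoE layer activates only a subset of its experts, the sketch below picks the top-k experts for one token from a list of router scores and normalizes their weights. The function name `top_k_route`, the eight example scores, and k = 2 are assumptions chosen for illustration; this is not the routing code of any model listed here.

```python
import math


def top_k_route(router_logits, k=2):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    # Indices of the k experts with the largest router scores.
    top = sorted(
        range(len(router_logits)),
        key=lambda i: router_logits[i],
        reverse=True,
    )[:k]
    # Softmax over the selected scores only, so the k weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Hypothetical router scores for one token across 8 experts.
logits = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]
routes = top_k_route(logits, k=2)
for expert, weight in routes:
    print(f"expert {expert}: weight {weight:.3f}")
```

Only the selected experts run for that token; the others are skipped, which is where the compute saving comes from.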

II. Key Features & Capabilities

* Multilingual: Several models (Qwen 2.5, Qwen 3.5, Qwen 3) are specifically designed for multilingual performance.

* Reasoning: Many of the larger models (Qwen 3.5, Qwen 3, Llama 4, DeepSeek V3.1) demonstrate strong reasoning abilities.

* Code Generation: Qwen 3, Qwen 3.5, and the Qwen 2.5 models are optimized for code generation.

* Multimodal: Qwen 3.5 and Qwen 3 are multimodal, capable of processing both text and image inputs.

* Retrieval-Augmented Generation (RAG): Command R is optimized for this.

* Tool Use: DeepSeek V3.1 offers improved tool use alongside its hybrid thinking mode.

III. Model Size Comparison

| Model Name | Parameters (Approx.) | Context Window (tokens) |
| ------------------------ | -------------------- | -------------- |
| Qwen 2.5 (32B) | 32B | 2K |
| Qwen 2.5 (72B) | 72B | 2K |
| Command R (35B) | 35B | 2K |
| OLMo 2 (32B) | 32B | 4K |
| Mixtral 8x7B | 47B | 2K |
| Mixtral 8x22B | 141B | 2K |
| Qwen 3 (235B) | 235B | 2K |
| Qwen 3 (480B) | 480B | 2K |
| Qwen 3.5 (122B) | 122B | 2K |
| Qwen 3.5 (397B) | 397B | 2K |
| Llama 4 Maverick (405B) | 405B | 128K |
| Qwen 3 Coder (480B) | 480B | 256K |
| DeepSeek V3.1 (671B) | 671B | 128K |

Notes:

* Context Window: Refers to the amount of text the model can consider at once. Larger windows generally allow for more coherent and contextually aware responses.

* "A3B" in a model name gives the active parameter count of a Mixture of Experts model: roughly 3B parameters are used per token.

* The information is based on the data provided. Specific details about training data, fine-tuning, and performance metrics would require further investigation.
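To connect the parameter counts above to local hardware, the sketch below estimates the memory needed for the weights alone at a few common precisions. It is a back-of-the-envelope calculation, not the method the site uses: the helper name `weight_memory_gb` and the chosen bit widths are assumptions, and the estimate ignores the KV cache, activations, and runtime overhead. For MoE models the total parameter count is used, on the assumption that every expert is held in memory.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    # params (in billions) * bits / 8 gives billions of bytes, i.e. GB.
    return params_billions * bits_per_weight / 8


# Parameter counts (in billions) taken from the table above.
models = {
    "Qwen 2.5 (32B)": 32,
    "Mixtral 8x7B": 47,
    "DeepSeek V3.1 (671B)": 671,
}

for name, params in models.items():
    # 16-bit, 8-bit, and 4-bit weights are illustrative precision choices.
    estimates = ", ".join(
        f"{bits}-bit: {weight_memory_gb(params, bits):.1f} GB"
        for bits in (16, 8, 4)
    )
    print(f"{name}: {estimates}")
```

Under these assumptions a 32B model needs about 64 GB for 16-bit weights and about 16 GB at 4 bits, before any memory for the context window is counted.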

- Title

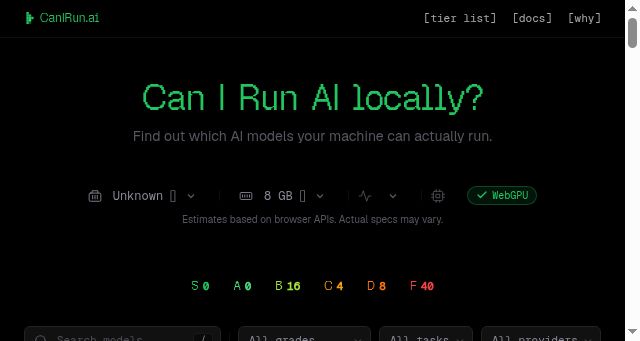

- CanIRun.ai — Can your machine run AI models?

- Description

- Detect your hardware and find out which AI models you can run locally. GPU, CPU, and RAM analysis in your browser.

- Keywords

- architecture, memory, chat, reasoning, active, year, code, model, vision, mistral, gemma, llama, open, context, edge, google, month

- NS Lookup

- A 172.67.201.155, A 104.21.44.173

- Dates

- Created 2026-03-14, Updated 2026-03-14, Summarized 2026-03-14

Query time: 933 ms